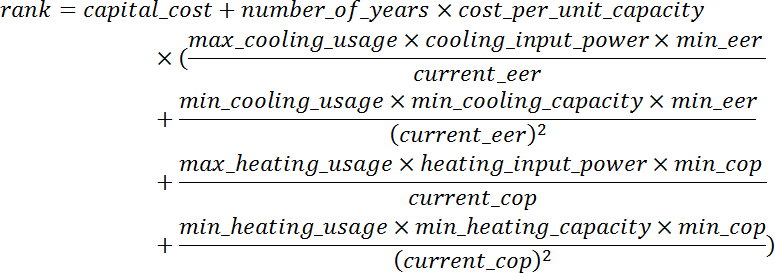

I’ve been looking to get ducted AC installed at my residence and have had a few quotes come in. Since the AC units, zoning and controls all came with a similar set of features, I approached the decision from an objective financial standpoint, ranking the offerings by both their capital and operating costs. The rank* is calculated by:

where

capital_cost is the total installation price in dollars

number_of_years is the number of years the AC will be in operation

cost_per_unit_capacity is the electricity price (e.g. cents per kWh)

max_cooling_usage is the number of hours per year the AC will run on max cooling capacity

min_cooling_usage is the number of hours per year the AC will run on min cooling capacity

max_heating_usage is the number of hours per year the AC will run on max heating capacity

min_heating_usage is the number of hours per year the AC will run on min heating capacity

cooling_input_power is the rated input power in kW for cooling

heating_input_power is the rated input power in kW for heating

min_cooling_capacity is the minimum cooling capacity in kW

min_heating_capacity is the minimum heating capacity in kW

min_eer is the min EER (Energy Efficiency Ratio) out of all the ACs being compared

current_eer is the EER of this particular AC

min_cop is the min COP (Coefficient Of Performance) out of all the ACs being compared

current_cop is the COP of this particular AC

I use the term rank rather than Total Cost of Ownership since I neither discount future cashflows nor take maintenance into account (I couldn’t get hold of spare parts price lists).

Most spec sheets also don’t provide an input power at min capacity, so the calculation estimates it from the EER/COP, input power and min capacity. For those that do provide an input power at min capacity, the 2nd and 4th components within the parentheses can be made similar to the 1st and 3rd components.
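As a rough illustration of the estimate described above, here is a minimal Python sketch. It is not the exact formula used for the rankings. It assumes the rank is the capital cost plus the undiscounted electricity cost over the operating years, with four components inside the parentheses (max cooling, min cooling, max heating, min heating). It also assumes that EER and COP are dimensionless (kW of output per kW of input), that they hold at minimum load, and that the electricity price is in dollars per kWh so it matches capital_cost. The function names `estimate_min_input_power` and `rank` and the optional `min_cooling_input_power` / `min_heating_input_power` parameters are hypothetical, and min_eer and min_cop are left out because this sketch does not need them.

```python
def estimate_min_input_power(min_capacity_kw, efficiency_ratio):
    """Estimate input power (kW) at minimum capacity as capacity / EER (or COP).

    Assumption: the rated EER/COP also applies at minimum load.
    """
    return min_capacity_kw / efficiency_ratio


def rank(capital_cost, number_of_years, cost_per_unit_capacity,
         max_cooling_usage, min_cooling_usage,
         max_heating_usage, min_heating_usage,
         cooling_input_power, heating_input_power,
         min_cooling_capacity, min_heating_capacity,
         current_eer, current_cop,
         min_cooling_input_power=None, min_heating_input_power=None):
    """Capital cost plus undiscounted electricity cost (assumed form)."""
    # Use the spec-sheet input power at min capacity when it is available,
    # otherwise fall back to the estimate from EER/COP and min capacity.
    if min_cooling_input_power is None:
        min_cooling_input_power = estimate_min_input_power(
            min_cooling_capacity, current_eer)
    if min_heating_input_power is None:
        min_heating_input_power = estimate_min_input_power(
            min_heating_capacity, current_cop)

    # Annual energy use in kWh: hours per year times input power in kW.
    annual_kwh = (
        max_cooling_usage * cooling_input_power        # 1st component
        + min_cooling_usage * min_cooling_input_power  # 2nd component
        + max_heating_usage * heating_input_power      # 3rd component
        + min_heating_usage * min_heating_input_power  # 4th component
    )
    return capital_cost + number_of_years * cost_per_unit_capacity * annual_kwh


if __name__ == "__main__":
    # Made-up numbers purely for illustration, not taken from any quote.
    example = rank(
        capital_cost=10000, number_of_years=10, cost_per_unit_capacity=0.30,
        max_cooling_usage=200, min_cooling_usage=400,
        max_heating_usage=100, min_heating_usage=300,
        cooling_input_power=4.0, heating_input_power=3.5,
        min_cooling_capacity=3.0, min_heating_capacity=2.8,
        current_eer=3.0, current_cop=3.5,
    )
    print(round(example, 2))
```

A lower rank is better under this reading. When a spec sheet does list the input power at min capacity, passing it in directly makes the 2nd and 4th components take the same hours-times-input-power shape as the 1st and 3rd.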

Ironically, I ended up choosing the AC ranked second, because of a qualitative aspect – the brand value that would have a bearing on price and availability of spare parts required outside the warranty period.